"WTF are AI Agents?"

If you're quietly asking yourself this, you're not alone. When 20 of my closest friends carved out an evening to ask me this question, I knew something had shifted.

A group of mates, a private library, and a question about the future

A few weeks ago, 20 of my closest friends asked me to give them a talk on AI agents.

I need to give you some context on this group. These are my closest school mates who, 21 years later, still find any excuse to play sport, travel and drink beers together. We support one another in our careers, of course, but the conversation rarely turns to deep technical work topics. Our work and home lives are intense enough, so we choose to spend our time together away from the desk, doing less serious things. That is how this group works, and it’s also what makes it special.

So when they asked me, out of the blue, to come and present to them on Artificial Intelligence, I paused. This was not the kind of thing we do. We are not the group of people who sit through dry presentations for fun.

But something had shifted. They had been reading my writing, following along with the changes playing out in society, and they wanted to go deeper. They wanted to understand what was actually happening, not just read about it.

The fact that this group, unprompted, carved out an evening to sit down and learn about AI agents tells you everything about where the general public’s awareness is right now. People can feel the ground moving. They just don’t have a framework for understanding it yet.

We held the session at one of our mates’ offices, in a private library setting. Everyone sat around with laptops open, beers in hand, ready to go. I expected heckling. If you knew this group, you would too. We love giving each other a hard time. But I had to remind myself: they invited me here. They chose to spend their evening on this. When I got up to present, they were surprisingly well-behaved. Engaged. Taking notes. Asking genuine questions.

That engagement told me something important.

The temperature of the room

Before the night, I sent out a survey to understand where everyone was at. All twenty filled it out. The results were a snapshot of the broader population, compressed into a room of intelligent, well-educated professionals working across knowledge-intensive industries.

Here is what stood out from the survey:

58% of the group flagged data privacy and security as their number one concern.

52% were worried about hallucinations.

A large number said they simply “did not know where to start”.

Here is the data point that stopped me: out of 20 highly capable people, only 1 or 2 actually understood what an AI agent was in practical terms. They were the ones experimenting, backing up company data and using it as context to let AI automate parts of their work. The rest were intelligent, curious, and eager to learn, but they had not yet crossed the line from using AI as a chatbot to deploying it as an agent.

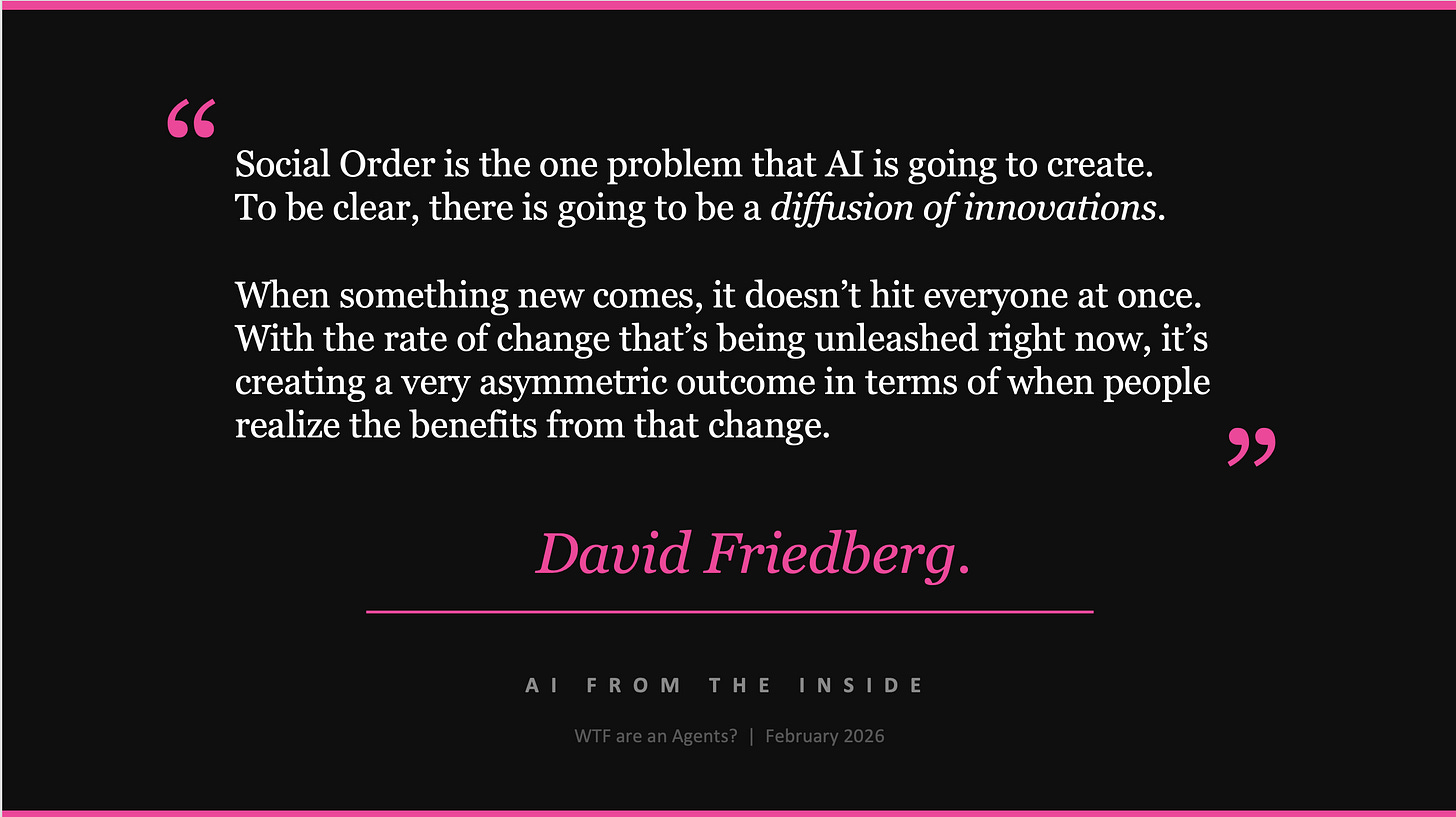

I want to be clear: this does not make the others incapable. Far from it. It makes them a perfect proxy for society. By a conservative estimate, fewer than 5% of the population truly understand what is unfolding here. Without guidance, without a framework for understanding this technology, the general population risks falling behind.

This article is my attempt to give you the same talk I gave my mates that night. What AI agents are. How they work. What they mean for the future of your work.

Let’s get into it.

The opening message

The first thing to understand is that in this brave new world of highly capable AI Agents, there are absolutely going to be winners and losers. Your job, and your opportunity, is to be curious and get on the right side of the ledger.

The following quote from David Friedberg, on a recent episode of the All-In Podcast, put it best:

So what is the change that's creating this asymmetry? It starts with understanding the difference between the AI most people are using today and what has quietly become possible with Agents. The gap between those two things is where the opportunity lives.

The Big Leap: from Chatbots to Agents

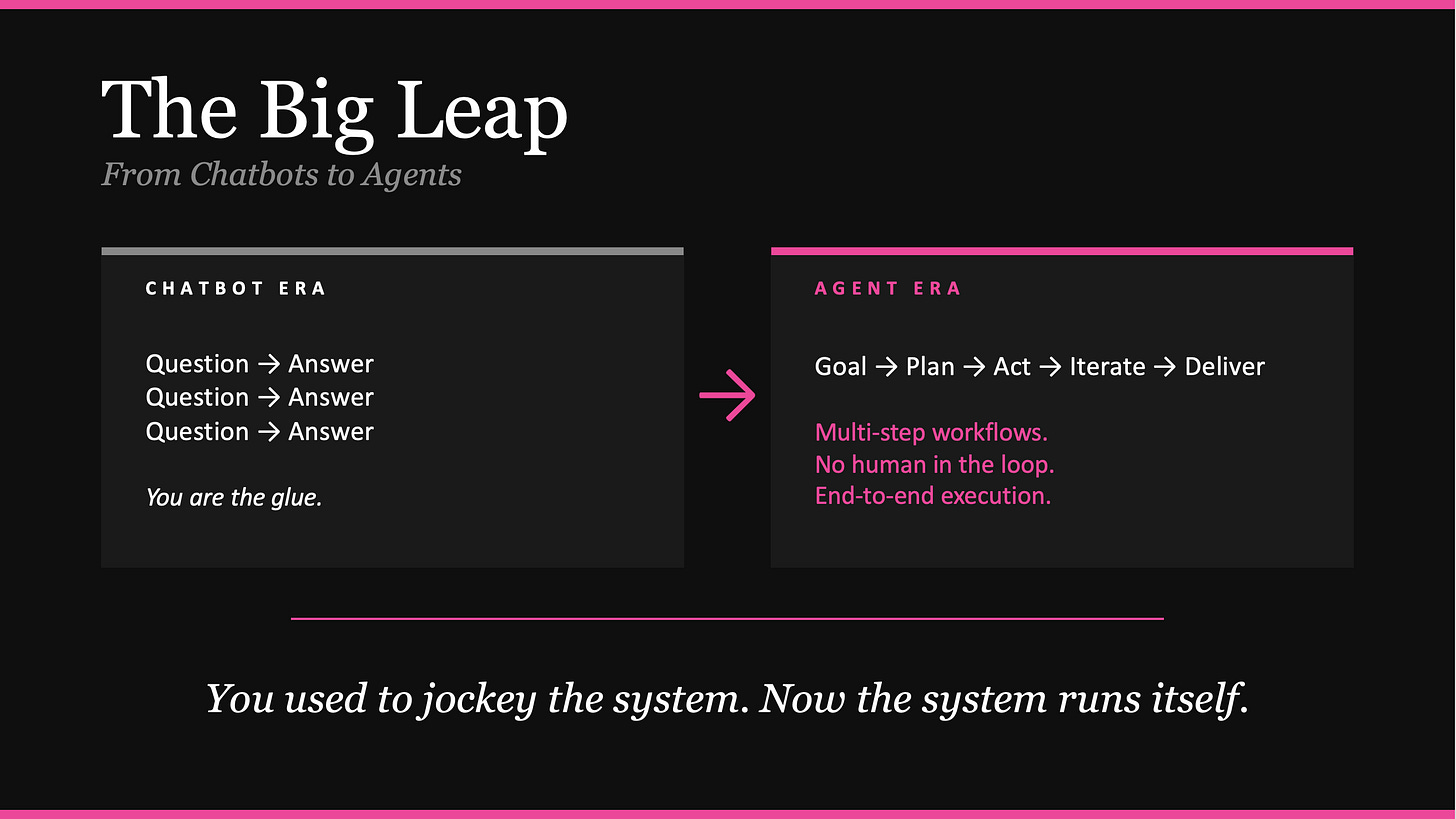

To understand where we are, you need to understand what has changed.

For the past few years, most people have used AI the same way: you open ChatGPT, type a question, get an answer, copy it somewhere, then come back with another question. You are the glue between every step. You prompt. You interpret. You take the output somewhere else. You prompt again. The AI is a tool you jockey, step by step, through a workflow that you manage entirely.

This was the chatbot era, and it’s already over.

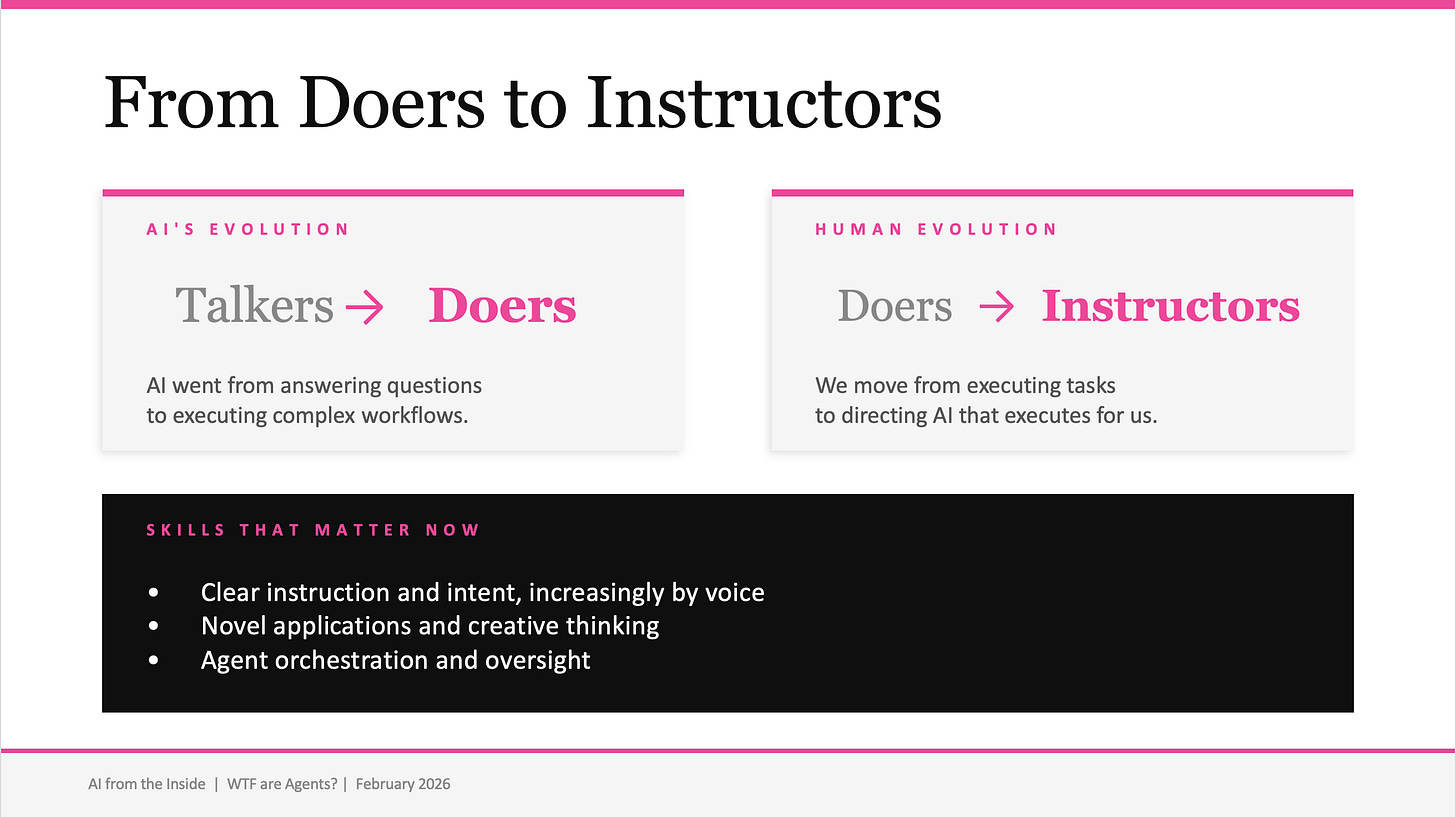

The shift that has happened, quietly but decisively, is from chatbots to agents. An agent does not wait for your next prompt. You give it a goal, provide it with context, and it operates end-to-end. It plans. It reasons through the problem. It calls tools, reads data, writes outputs, checks its own work, and delivers a completed result. The human is no longer the glue between steps. The system can increasingly run itself.
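For readers who think in code, here is a minimal sketch of that loop: a goal goes in, the agent repeatedly decides on a next action, calls a tool, and stops when it judges the work complete. Everything in it is an assumption made for illustration. The `decide` method is a hard-coded stand-in for a real model, and `read_notes` and `write_summary` are made-up tools, not part of any real product or SDK.

```python
from dataclasses import dataclass, field
from typing import Callable

# Hypothetical tools: each is one plain function the agent may call.
def read_notes(_: str) -> str:
    return "raw notes: Q3 revenue up 12%, churn flat"

def write_summary(text: str) -> str:
    return f"SUMMARY: {text}"

TOOLS: dict[str, Callable[[str], str]] = {
    "read_notes": read_notes,
    "write_summary": write_summary,
}

@dataclass
class Agent:
    goal: str
    history: list[str] = field(default_factory=list)

    def decide(self) -> tuple[str, str]:
        """Stand-in for the model: pick the next (tool, argument) or finish."""
        if not self.history:
            return "read_notes", ""
        if len(self.history) == 1:
            return "write_summary", self.history[0]
        return "done", self.history[-1]

    def run(self, max_steps: int = 5) -> str:
        for _ in range(max_steps):  # cap the steps so the loop always ends
            tool, arg = self.decide()
            if tool == "done":
                return arg
            self.history.append(TOOLS[tool](arg))
        raise RuntimeError("step budget exhausted")

result = Agent(goal="Summarise my notes").run()
print(result)
```

Notice that no human sits between the steps. In a real agent the `decide` step would be a model call, but the shape of the loop is the same.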

This is not a theoretical leap. It is working in production today.

If I had to pinpoint the tipping point, it was the release of Anthropic’s Claude Opus 4.5 on November 24, 2025. This model represented a step change in reasoning, tool use, and the ability to sustain long-running, complex workflows without falling apart.

Enterprises began trialling Claude’s SDK for complex workloads almost immediately, and the adoption curve has been steep. Paired with Claude Code, which had already become the dominant AI coding tool through 2025, Opus 4.5 gave developers and businesses the engine they needed to move from chatbot interactions to genuinely agentic workflows.

The difference is fundamental. In the old model, you do the work and AI assists. In the new model, AI does the work and you direct. You set the goal and the context. The agent figures out the rest.

Building an Agent is like building a Human

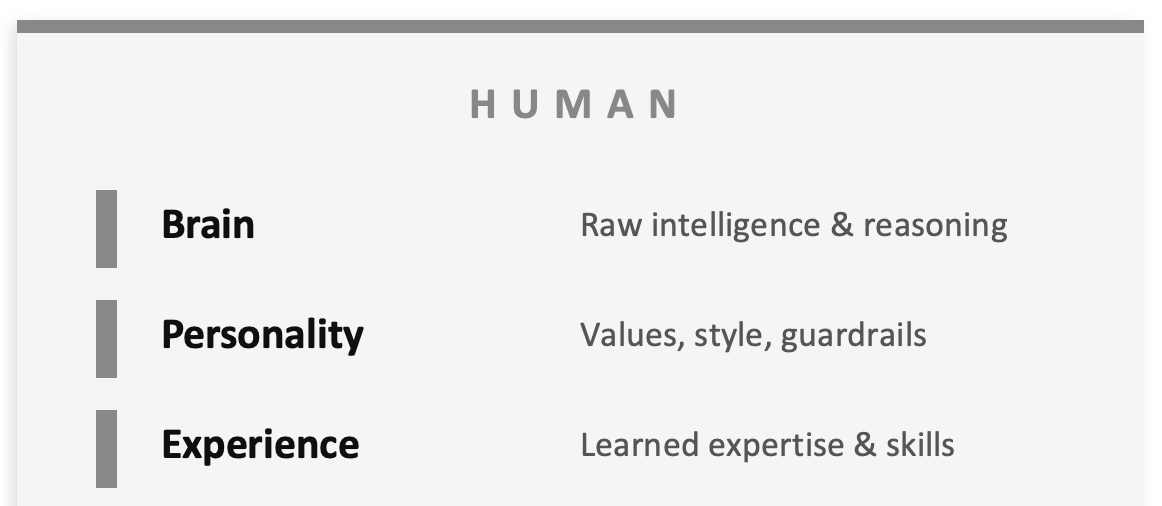

When I explained agents to the group that night, I used an analogy that seemed to make everything click: building an agent is almost identical to building a human workforce.

Think about what we look for when we hire someone.

We put candidates through interviews, background checks, reference calls. We look at their CV. What we are really evaluating comes down to three things:

Their brain: their raw intelligence, their ability to reason through problems, to think critically.

Their personality: their values, their communication style, their professional standards, the guardrails they operate within.

Their experience: the accumulated expertise they have built over years of doing the work, the judgment they bring to complex situations.

These three traits, intelligence, personality, and experience, are what determine whether someone is a fit for the company. Whether they can do the job. Whether they will represent the organisation well.

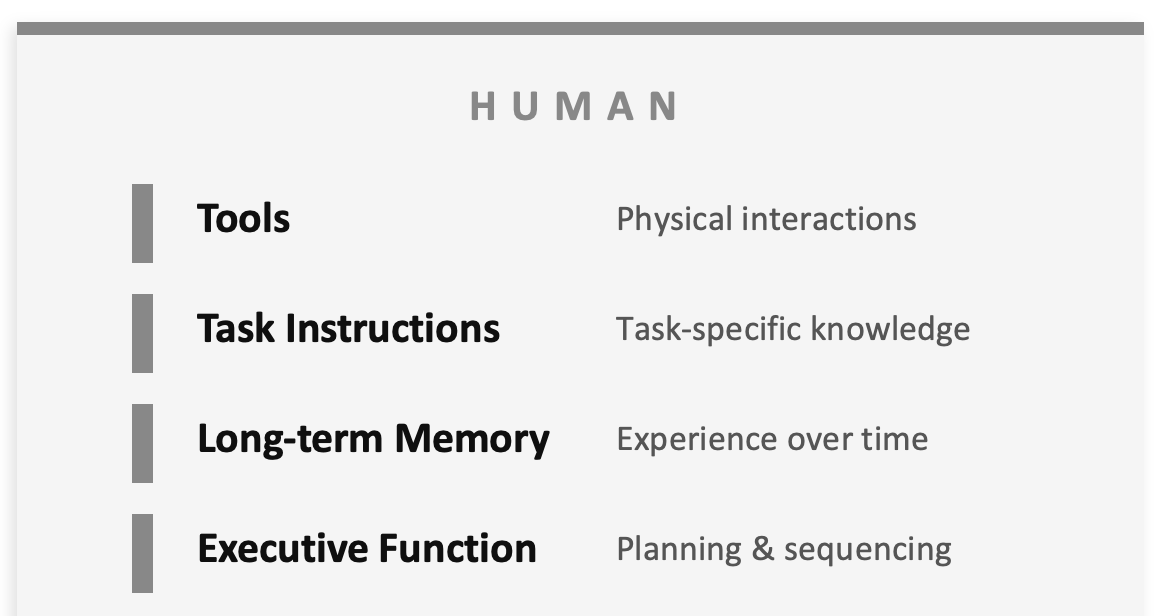

Now consider what happens once we hire them. We onboard them.

We give them tools: a laptop, access to Microsoft 365, Outlook for email, Excel for data, and whatever domain-specific software the organisation uses.

We give them task instructions: here is the client, here is the scope, here is what needs to happen by Friday.

As they work, they build up long-term memory. They learn the quirks of each client. They remember that a particular stakeholder prefers phone calls over email, or that a certain supplier always sends invoices late. This memory becomes incredibly valuable to the company.

And their executive function, their ability to plan, sequence, and execute work, varies from person to person and even day to day, depending on how they feel, how well they can prioritise, and how consistently they perform.

Now let’s put this picture together and flip across to the agent.

Every single one of these traits has a direct parallel.

The brain is the model: the underlying AI that reasons and generates. Claude Opus, Claude Sonnet, GPT, Gemini. Different models have different strengths, just like different people. Not every task needs the most powerful model. Sometimes fast and cheap beats slow and brilliant. You match the brain to the job.

Personality becomes instructions: the system prompt that defines who the agent is, how it behaves, and what its boundaries are. “You are a senior financial controller. Be precise and conservative. Always flag discrepancies over $500. Never auto-approve payments above $10,000.” Without clear instructions, an agent is generic. With well-crafted instructions, it becomes a specialist.

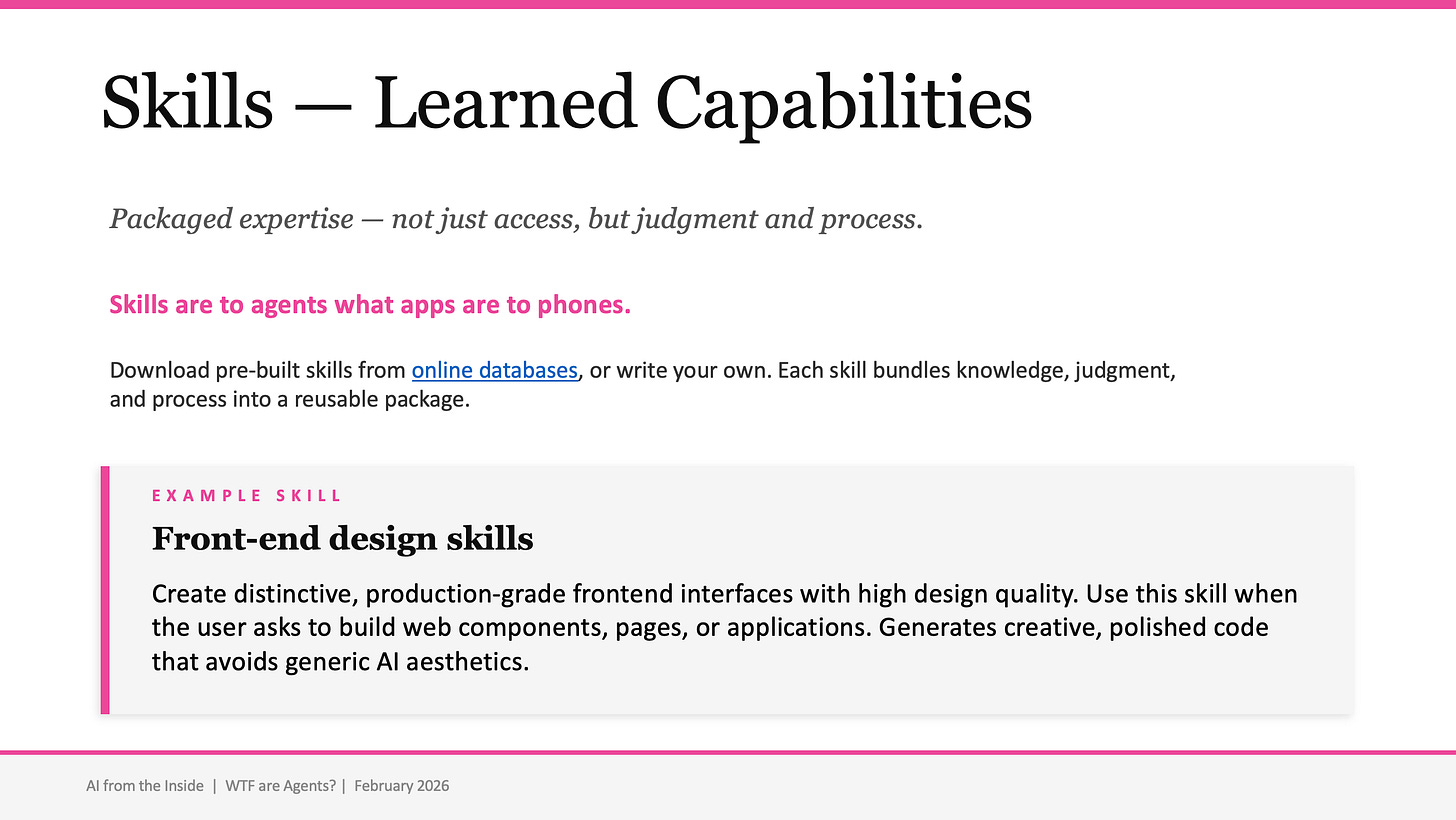

Experience maps to skills: packaged expertise that combines knowledge, judgment, and process. This is where it gets interesting, and where the biggest improvements have happened.
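The three parallels above (model, instructions, skills) can be pictured as a small configuration object. This is a sketch under stated assumptions, not any vendor's API: `AgentSpec`, the model name, and the skill name are all placeholders. The two helper functions reflect one common precaution that goes beyond what the analogy itself says: mirroring the thresholds from the example prompt in ordinary code, so they hold even if the model misreads its instructions.

```python
from dataclasses import dataclass

@dataclass(frozen=True)
class AgentSpec:
    model: str                    # the "brain"
    instructions: str             # the "personality" (system prompt)
    skills: tuple[str, ...] = ()  # the "experience"

controller = AgentSpec(
    model="fast-cheap-model",  # placeholder, not a real model ID
    instructions=(
        "You are a senior financial controller. Be precise and conservative. "
        "Always flag discrepancies over $500. "
        "Never auto-approve payments above $10,000."
    ),
    skills=("month-end-reconciliation",),  # hypothetical skill name
)

# Thresholds copied from the example instructions (assumed to be in dollars).
FLAG_THRESHOLD = 500
APPROVAL_LIMIT = 10_000

def needs_flag(discrepancy: float) -> bool:
    """True when a discrepancy is large enough that it must be flagged."""
    return abs(discrepancy) > FLAG_THRESHOLD

def may_auto_approve(payment: float) -> bool:
    """True only for payments at or below the approval limit."""
    return payment <= APPROVAL_LIMIT
```

Swapping the `model` field is the code equivalent of matching the brain to the job; the rest of the spec stays the same.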

Deep Dive #1: Skills

If you have seen The Matrix, you remember the scene. Neo sits in a chair, a cable plugged into his brain, and in seconds he opens his eyes and says: “I know kung fu.” Instant expertise. Uploaded directly into his mind.

Skills for AI agents work exactly like this.

A skill is a markdown file, a structured document that packages a capability and uploads it to the agent’s brain. It bundles the knowledge, the judgment, the process, and the decision-making logic for a specific domain into something the agent can absorb and apply. One moment the agent does not know how to create a professional PowerPoint presentation to your brand standards. You upload the ‘brand guidelines’ skill, and suddenly it does. Not just the mechanics of creating slides, but the design principles, the layout logic, the quality standards.

You can download pre-built skills from online databases, or you can write your own for specific workflows. Each skill extends what the agent can do, in the same way that years of professional experience extend what a human can do, except it happens in seconds rather than years.
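To make "a skill is a markdown file" concrete, here is a rough sketch of what loading one might look like. The layout is an assumption for illustration only: a short header between `---` lines with `name` and `description` fields, followed by the guidance itself. Real skill formats may differ, and `parse_skill` and `with_skill` are hypothetical helpers, not functions from any library.

```python
# A made-up skill document: header fields, then the packaged guidance.
SKILL_TEXT = """\
---
name: brand-guidelines
description: Build slide decks that follow the house brand.
---
Use the primary palette for headings.
Keep each slide to one idea.
"""

def parse_skill(text: str) -> dict[str, str]:
    """Split a skill document into its header fields and its body."""
    _, header, body = text.split("---\n", 2)
    fields = dict(line.split(": ", 1) for line in header.strip().splitlines())
    fields["body"] = body.strip()
    return fields

def with_skill(instructions: str, skill: dict[str, str]) -> str:
    """Append the skill's guidance to the agent's instructions."""
    return f"{instructions}\n\n## Skill: {skill['name']}\n{skill['body']}"

skill = parse_skill(SKILL_TEXT)
prompt = with_skill("You are a presentation assistant.", skill)
print(prompt)
```

The "I know kung fu" moment is the last line: the agent's instructions now contain expertise it did not have a moment earlier.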

This is one of the most significant shifts in agentic AI. Skills are becoming increasingly sophisticated, and the ecosystem around them is growing rapidly. Anthropic recently introduced a library of skills inside its own product suite, and some of those releases were followed by a sharp sell-off in SaaS stocks. The ability to package expertise and plug it into an agent is what turns a clever chatbot into a genuinely helpful worker.

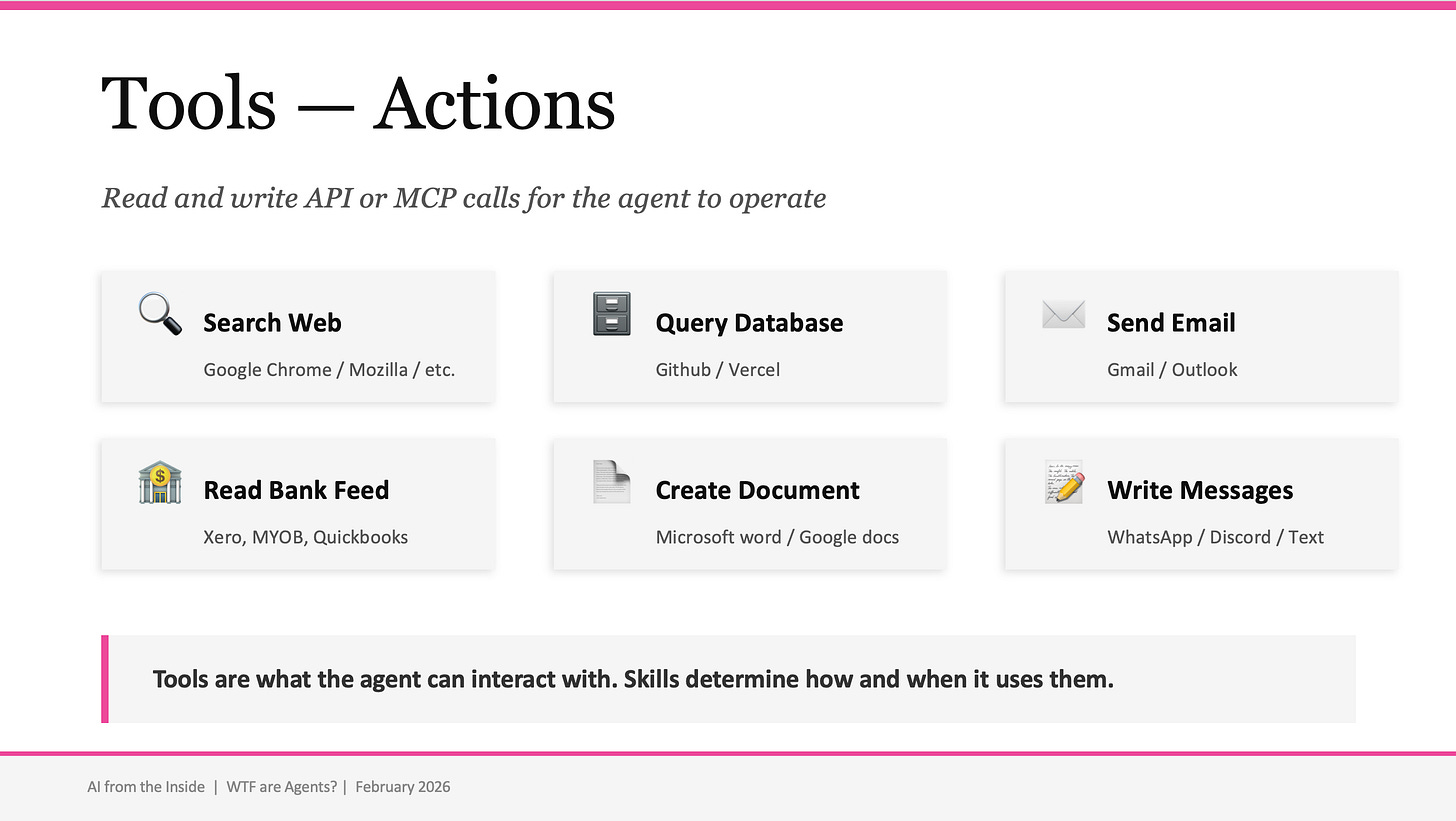

Deep Dive #2: Tools

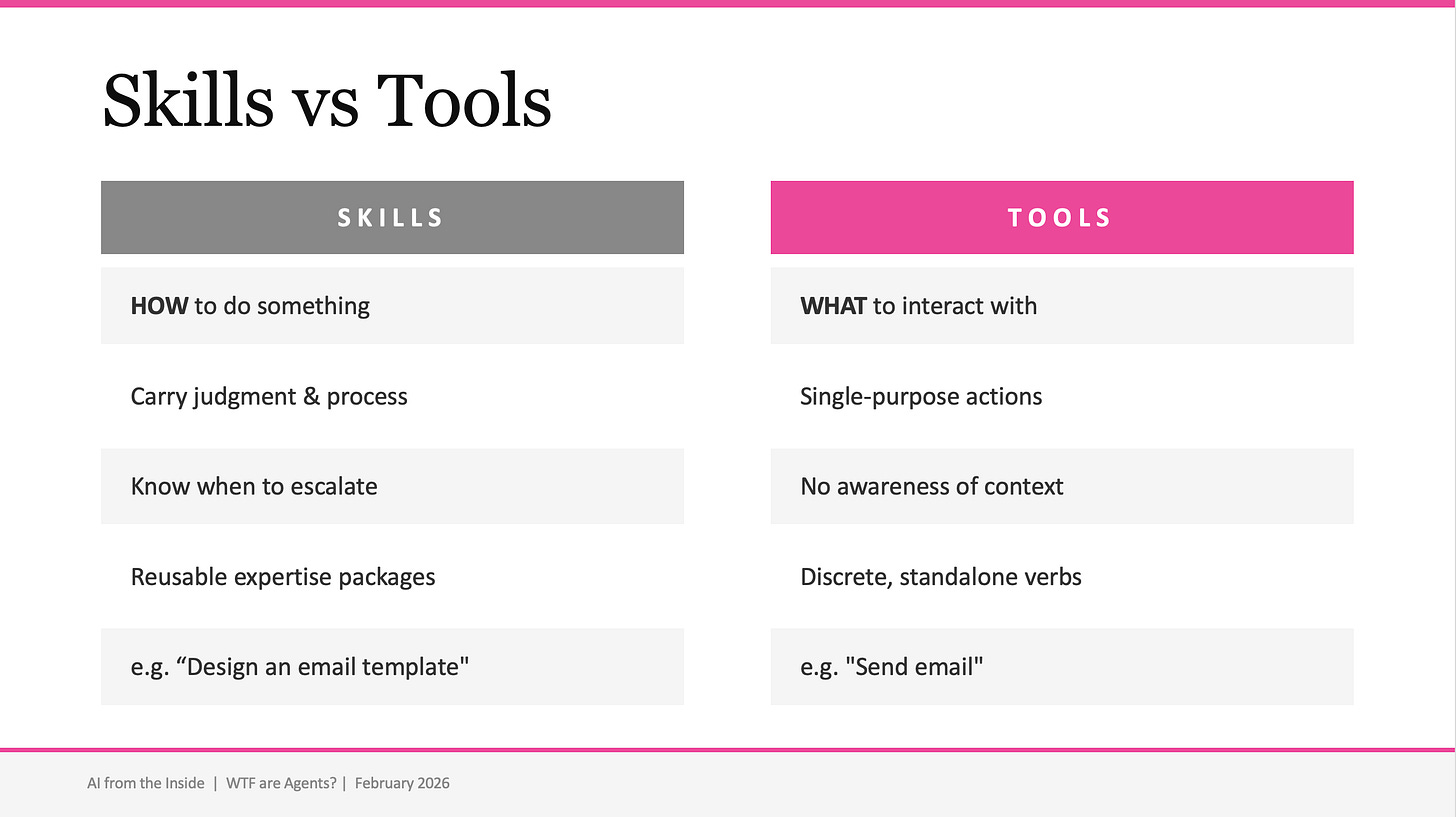

Once you have a skilled agent, it needs the ability to act. That is where tools come in. Tools are the verbs: discrete, single-purpose actions the agent can execute. Search the web. Query a database. Send an email. Read a bank feed. Create a document. Post a message. Each tool does one thing.

What has improved dramatically is the way agents access tools. Through APIs and the Model Context Protocol (MCP), agents can now connect to an expanding universe of external services. Your email, your calendar, your CRM, your accounting software, your file system. And critically, they can chain these tool calls together in sequence, completing long-running processes rather than just responding to a single prompt. This is the heart of what makes an agent an agent.

The critical distinction is this: tools carry no judgment about when or why to use them. A tool is just a capability. The skill decides when and how to use it. “Manage client communications” is a skill. It uses tools like read_email, send_email, and search_crm. But the skill is what determines the tone, the timing, when to follow up, and when to escalate.
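The split between tools and skills can be sketched in a few lines. The tool names `read_email`, `send_email`, and `search_crm` come from the example above; their bodies, the sample data, and the five-day escalation rule are all invented for illustration. The point is only the division of labour: the tools act, the skill decides.

```python
from dataclasses import dataclass

@dataclass
class Email:
    sender: str
    subject: str
    days_unanswered: int

# Tools: single-purpose actions with no judgment of their own.
# All three are stubs returning made-up data.
def read_email() -> list[Email]:
    return [
        Email("ana@client.example", "Invoice query", 1),
        Email("raj@client.example", "Contract renewal", 6),
    ]

def search_crm(sender: str) -> dict[str, str]:
    tiers = {"raj@client.example": "key-account"}
    return {"tier": tiers.get(sender, "standard")}

def send_email(to: str, body: str) -> str:
    return f"to={to}: {body}"

# Skill: the judgment about when and how to use the tools.
def manage_client_communications(escalate_after_days: int = 5) -> list[str]:
    actions = []
    for mail in read_email():
        tier = search_crm(mail.sender)["tier"]
        if tier == "key-account" and mail.days_unanswered >= escalate_after_days:
            actions.append(f"ESCALATE {mail.sender}: {mail.subject}")
        else:
            actions.append(send_email(mail.sender, f"Re: {mail.subject} - on it."))
    return actions

actions = manage_client_communications()
print(actions)
```

The skill also chains three tool calls in sequence, which is the same chaining described in the previous paragraph, just at toy scale.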

It has to be experienced to be believed

I can describe agents all day. But the moment that changed the energy in the room that night was not theory. It was the demo.

While sitting in the library, beers in hand, we built a full working website. A clubhouse prototype for our friendship group. In real time. The group watched as the agent read the spec, planned the build, wrote the code, tested it, and delivered a working product. Not over days or weeks. Right there, while we were talking.

The reaction was exactly what I have seen every time someone experiences this for the first time. A mix of excitement and unease. The kind of feeling that rewires your expectations about what is possible.

I cannot write that experience for you. You need to feel it yourself.

Two tools, in particular, make this accessible for anyone. Both are built on Anthropic’s Claude Agent SDK, which means they share the same agentic engine under the hood.

1. Claude Code: for software builders

Claude Code is a command-line agent for developers. You point it at a specification document, a description of what you want built, and it plans, reasons, writes code, runs tests, fixes errors, and iterates until the job is done. It is not code completion or autocomplete. It is a software engineer (or assistant) that works while you sleep.

I have run Claude Code on complex projects overnight and woken up to 26,000 lines of working code. Not perfect (refinement is always needed), but 90% of the work was done autonomously. The shift is fundamental: I did not write the code. I wrote the intent. The agent figured out the rest.

Claude Code went viral as people, including many non-programmers, discovered what it could do. Anthropic reported a 5.5x increase in Claude Code revenue by mid-2025, and by the time Opus 4.5 launched in November, it was widely regarded as the best AI coding assistant available.

2. Claude Cowork: for everyone else

In January 2026, Anthropic launched Cowork, and this is the one I want you to pay attention to. Anthropic described it as “Claude Code for the rest of your work,” and that framing is exactly right.

Cowork gives you a tab in the Claude desktop app where you point Claude at a folder on your computer. It can then read, edit, and create files in that folder. You give it a task, it makes a plan, and it works through it step by step, looping you in as it goes.

This is what the shift from chatbot to agent looks like for knowledge workers. You are not going back and forth in a chat window. You are leaving instructions for a colleague and letting them get on with it.

Use cases that are working right now: synthesising research from multiple documents into a first draft, reorganising and renaming messy downloads, and producing a presentation from raw notes and data. I built the very presentation I gave that night using Cowork, feeding it my survey data, brand guidelines, speaker notes, and images in a folder, then giving it clear instructions on what to build.

The challenge

If you take one thing from this section, let it be this: go and try it. Sign up to Claude (or your other chosen AI Agent toolkit). Point it at a folder of messy files and ask it to make sense of them. The first time you watch an agent plan its approach, work through your documents, and deliver something you did not have to build yourself, something shifts in your understanding of what is coming.

It feels magical. That is exactly how it should feel.

What does it all mean? The recursive leap

There is a question that kept coming back to me as I prepared for that night: why are all the leading AI labs solving software engineering first?

My initial read was that it was circumstantial. Most of the people employed by these companies are engineers. They are solving their own problems first. Building tools for the work they understand best.

I have updated my view. It is more strategic than that.

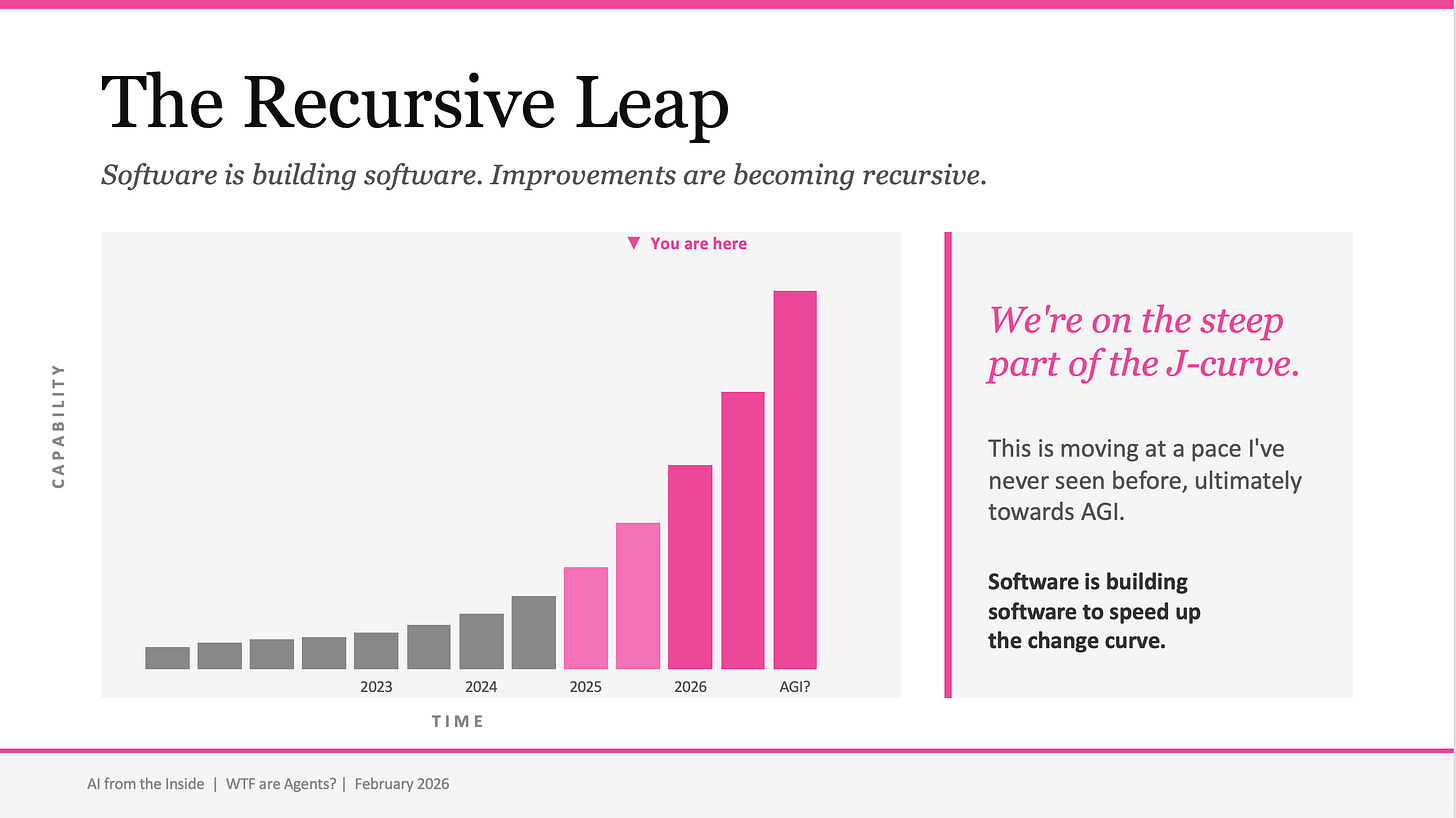

They are solving code first because coding is recursive. Software can build more software. And when software builds better software that then builds even better software, you get a compounding acceleration that is unlike anything we have seen in technology.

The evidence is in the release cycles. Claude Opus 4.5 launched on November 24, 2025. Claude Opus 4.6 launched on February 5, 2026. That is roughly ten weeks between major model releases. Anthropic has been open about the fact that Cowork itself was built primarily by Claude Code in approximately a week and a half. Software building software, building more software.
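The "roughly ten weeks" figure is easy to check from the two launch dates quoted above. This is just date arithmetic; the variable names are mine.

```python
from datetime import date

opus_4_5 = date(2025, 11, 24)  # launch date quoted above
opus_4_6 = date(2026, 2, 5)    # launch date quoted above

gap_days = (opus_4_6 - opus_4_5).days
gap_weeks = gap_days / 7
print(gap_days, round(gap_weeks, 1))  # 73 10.4
```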

We are on a J-curve, but what we are actually seeing is a line going almost straight up. New models are going to be released at shorter and shorter intervals, and the capabilities of those models are going to improve with each iteration. Our societal structures are simply not ready for this pace of change: not our education systems, not our regulatory frameworks, not our professional development models. We are in uncharted territory.

And here is the part that should ground all of this: ChatGPT launched in November 2022. It has been in the market for just over three years. Three years. Look at how far we have come. Now ask yourself: where will this technology be five years from now?

I do not think most people have fully reckoned with the answer to that question.

The role of humans in this brave new world

So where do humans fit?

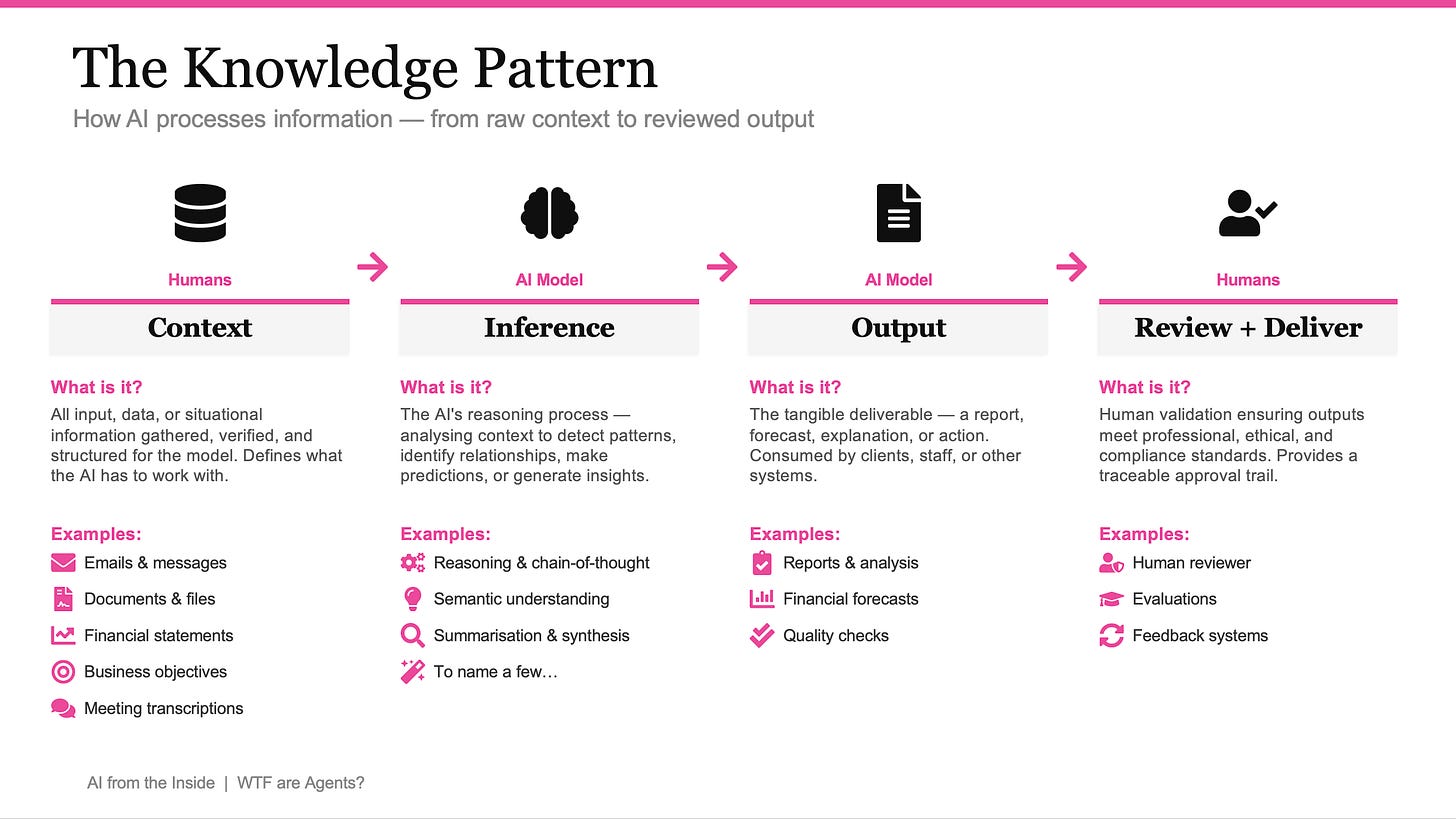

I think about this through what I call the Knowledge Pattern, a framework for how AI processes information in a professional context. It has four stages, and humans bookend the process.

Humans frame the problem and provide the context. AI does the heavy analytical lifting. Humans review, validate, and deliver.

When I think deeply about this, I worry about the people who haven’t figured this out yet and are not aligning their careers and skills to these human-centred tasks. If your value proposition is built on being the person with the answers, the person who can recall technical knowledge and explain it clearly, that moat is filling in fast. AI does that now, and it is only getting better.

I explored this in depth in a previous piece, In the Age of AI, Asking the Right Questions Matters More Than Having the Right Answers. The core argument is that professional value is shifting from recall knowledge to framing, judgment, context, and questioning. The professionals who lean into these skills will differentiate. The ones who stay anchored to being the source of answers will feel increasing pressure.

The opportunity is real, but it requires intentional action. You need to develop the skills that sit on the human side of the Knowledge Pattern: context engineering, quality judgment, creative framing, and the ability to direct AI rather than compete with it.

The skills that matter are shifting. The professionals who recognise this early and act on it will not just survive the transition; they will define what great looks like on the other side.

The closing thought

I will leave you with the same three pieces of advice I left my mates with that night.

1. Be curious. Get uncomfortable.

This world is evolving faster than any technology shift in living memory. The people who will thrive are the ones who lean into the discomfort of not knowing, who experiment, who treat every new tool as an opportunity to learn rather than a threat to resist. Curiosity is your most valuable asset right now. Use it.

2. Experience what working with an agent feels like.

You cannot understand this by reading about it. You need to feel it. Sign up to Claude. Use Cowork. Point it at a folder on your desktop and give it a real task. The first time you watch an agent produce work that is genuinely better than what you expected, something changes in how you think about the future. It is equal parts magical and terrifying. That is the point.

3. Align your career, and yourself, to the future.

Find an employer who will give you opportunities to develop the skills that are going to remain human: context engineering, creative problem-solving, judgment, orchestration, and the ability to direct intelligent systems toward meaningful outcomes. These are the skills that will keep you relevant, and valuable, in the years ahead. Do not wait for someone to hand you this opportunity. Go and create it.

If this resonated, share it with someone who is navigating the same shift. And if you are experimenting with agents, I want to hear about it. Hit reply and tell me what you are building.

Thomas
